Moving from Static AI Literacy to an Always-on Learning Arena

Here's how to create living learning infrastructure that keeps up with AI.

At Unleash America last week, I watched a session on Marriott’s approach to retraining 1,000,000 employees and found myself nodding along with the energy in the room. I appreciated that they were talking about how to move learning through a large, distributed organization, not just what to teach.

It’s the right question. And most enterprises I talk to aren’t asking it.

The dominant model for AI learning in large organizations right now looks something like this: leadership identifies a skill gap, L&D builds a curriculum, a platform gets licensed, cohorts get scheduled, modules get completed, and certificates get issued. The box is checked. The program is declared a success.

The speed of AI advancements turns this training model on its head.

Six months after traditional AI training, the capabilities that felt cutting-edge in Q1 are table stakes in Q3. The workflows that employees just learned have already shifted. And the organization’s response simply can’t be to build another training program.

This is the training trap. And understanding why it fails is the first step toward building something that actually works.

The Structural Mismatch of Learning AI

There’s a structural mismatch at the heart of enterprise AI learning that most organizations haven’t fully confronted: the tools are evolving faster than the training cycles.

The expiration date on technical training used to be measured in years. A PowerPoint certification from 1997 was still largely valid in 2002. An Excel course from 2008 didn’t require a major overhaul by 2010. The tools were stable enough that a discrete learning event such as a workshop, a certification, or a cohort could remain relevant long enough to justify the investment.

With AI, that expiration window has compressed to months, if not weeks. GPT-4 to o3. Claude 2 to Claude 4.6. Each release changes what’s possible, what’s expected, and what employees need to understand about their own work. A training program built on last season’s capabilities teaches people to think about AI’s possibilities in terms that no longer describe what the tool can actually do.

The World Economic Forum projects that 59% of the global workforce will need reskilling by 2030. But the implication buried in that statistic is that reskilling is not a project with a finish line. It is a permanent operating condition. The moment you complete a comprehensive training rollout, the clock on its obsolescence has already started.

And yet, organizations keep reaching for the training program because it is the most familiar shape for learning. It has a sponsor, a budget, a timeline, and a completion metric. It looks like progress. It produces certificates.

What it doesn’t produce is an organization that knows how to keep learning as the landscape shifts.

So… How Can We Keep Up with AI?

The real question becomes: whose job is it to keep up with AI?

In most organizations, the honest answer is everyone’s, which means no one’s. Keeping up with AI is a near full-time job. And everyone in the organization is full up. The CAIO has a strategy to manage. The business unit leaders have quarterly targets to hit. The managers have teams to run. Staying current on AI model developments and their implications for our workflows is on everyone’s list…and nobody’s actual job.

The result is predictable. The organization’s working knowledge of AI capabilities drifts behind the actual state of the tools. People use yesterday’s mental models to make today’s decisions. Competitive advantage erodes from a slow accumulation of not-quite-current understanding.

PwC recognized this problem early and built something different. Rather than rolling out uniform AI training to their entire workforce, they created a network of AI champions who were explicitly responsible for staying current on AI capabilities, translating that knowledge for their specific business context, and getting it into the hands of their peers. Their Prompting Parties, highly interactive, peer-led sessions where employees experiment with generative AI on real work problems, generated over 400 requests for additional sessions in the first few months and reached more than 22,000 employees.

PwC didn’t try to keep everyone equally current on everything. They built a distribution infrastructure for learning. A network of informed nodes who could translate new capability into relevant context for the people around them.

That’s a fundamentally different architecture than a training program.

The AI Learning Flywheel

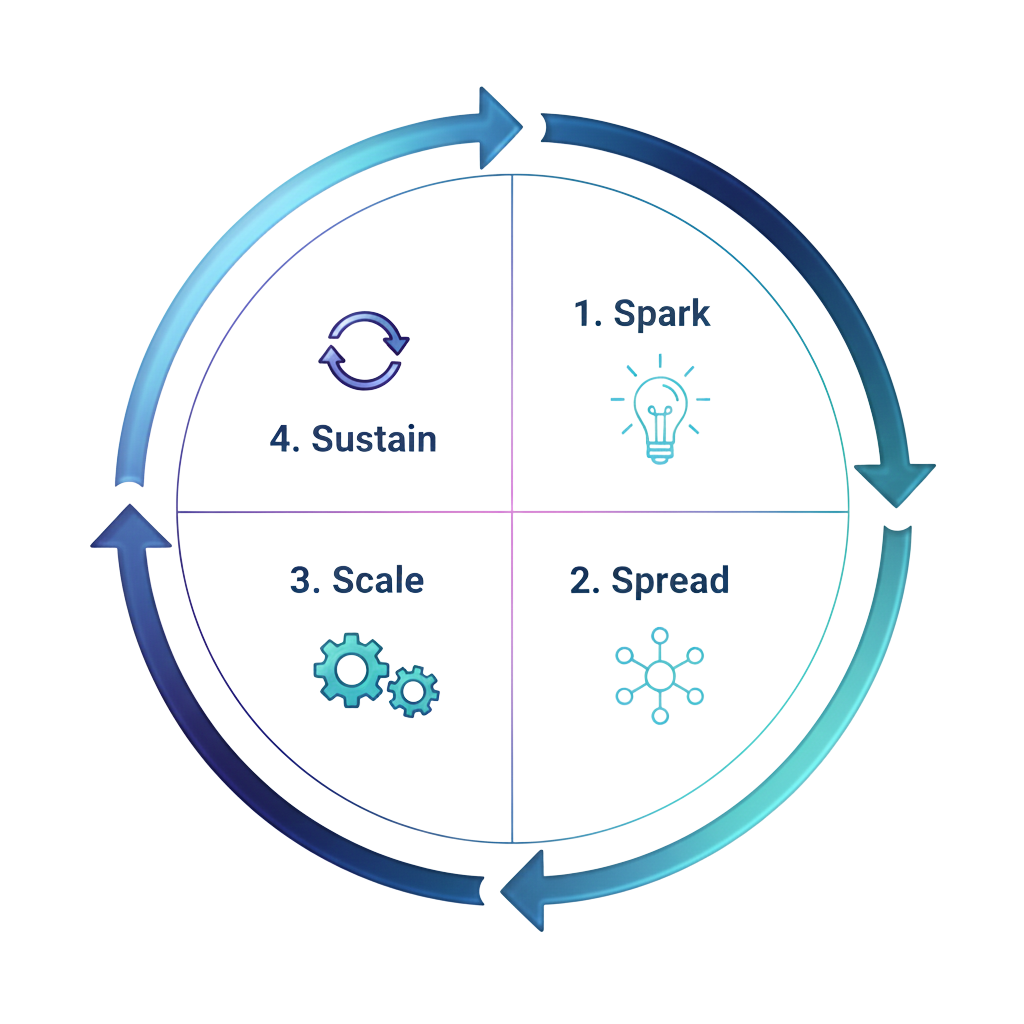

In the Hyperadaptive Model, this architecture has a name: the AI Learning Flywheel. And it runs on three interconnected layers.

AI Activation Hubs are the sensing layer. A team (or network of teams in large organizations) whose job includes monitoring AI advancements, evaluating their implications for specific functional contexts, and atomizing that knowledge into micro-bites of learning that can actually travel through the organization. This is the group that absorbs the complexity so everyone else doesn’t have to. They are, in effect, the organization’s immune system against AI-obsolescence.

The AI Leads are the distribution layer. Embedded in business functions, AI Leads receive the contextualized knowledge from the Activation Hub and get it into the hands of their peers. They’re function-specific translators who understand both the capability and the workflow it affects. And they’ve been trained on the most effective way to spread knowledge. Legal AI Leads understand legal workflows. Finance AI Leads understand finance workflows. That context is what makes the learning land.

The Communities of Practice are the integration layer. Informal at first, increasingly structured over time, these communities are where practitioners share what’s working, surface what isn’t, share learnings with the Activation Hubs, and build the collective intelligence that no individual node could develop alone. During Stage 3 of the Hyperadaptive journey, some form around technical challenges, such as “how do we build effective learning loops into our customer service automations?” Others address human concerns: “what skills should we develop as our roles evolve?”

Together, these three layers create a flywheel: new capability enters through the Activation Hub, gets contextualized by AI Leads, gets applied and stress-tested by practitioners, and the resulting insights flow back up through the network. The flywheel is not a program with a start and end date. It is an operating rhythm.

What This Looks Like in Practice

Let me make this concrete. Anthropic releases its newest model, Claude 4.6. For most organizations, the response is: someone reads the release notes, maybe a Slack message goes out, and 95% of employees continue using AI the way they were using it last quarter.

In an organization with a functioning Learning Flywheel, it plays out differently. The AI Activation Hub in, say, the legal function assesses the specific implications of the 4.6 release for how legal currently uses AI: what changed, what’s now possible that wasn’t, and which previous workarounds are now unnecessary. They spin up a brief, contextualized update: a short video, a one-pager, a practical example. It goes to the Legal AI Leads, who demo it to their peers.

With this approach, the legal team’s working mental model of their AI tools updates in days, not quarters. No enterprise-wide training program required. No cohort scheduling. No completion metrics that have nothing to do with whether anyone actually changed how they work.

What ‘always on’ learning requires is investment in the learning arena. The good news? You can upskill and redeploy existing people to make this happen.

Creating dedicated ways for learning to flow is what it means to have a learning infrastructure rather than a learning program. The infrastructure is always on. The knowledge flows continuously. The organization doesn’t fall behind between training cycles because there are no training cycles. Rather, there is the ongoing rhythm of sense, translate, distribute, apply, and feed back.

What Does Your Organization Look Like?

Take a moment to map how your organization currently handles a major AI model release. Who finds out first? How long does it take to reach the practitioners actually using the tool? What determines whether they update how they work, or whether they keep doing what they were doing? Who is responsible for that answer?

In most organizations, that map reveals a learning infrastructure that stops at the awareness layer. People know something has changed, but the knowledge doesn’t reliably travel to the people who need to act on it, in the context that would make it actionable.

The WEF’s Future of Jobs Report 2025 found that 52% of leaders now rank job redesign as their top workforce priority. That tells you the work itself is the moving target. An organization where the learning infrastructure can’t keep pace with tool evolution is already losing ground on the larger challenge of work redesign.

Building the flywheel is not a technology project. It requires designated people (Activation Hubs, AI Leads), a communication rhythm, and the cultural commitment to treat learning as infrastructure rather than event. It requires, at minimum, asking: whose job in this organization is it, actually, to keep up with AI? And then building the distribution system that lets their knowledge travel.

Final Thoughts

The organizations winning with AI are the ones where new capability travels fastest from the people who understand it to the people who can act on it.

That’s a distribution problem. And distribution problems require infrastructure.

The training program made sense in a world where the tools were stable and the gaps were bounded. In a world where the models evolve every six months, where the workflows are continuously being redesigned, and where the humans responsible for judgment calls on AI-generated output need to keep getting sharper, the training program is a coping mechanism dressed up as a strategy.

That’s not to say we don’t need our L&D professionals. We do. We just need them in a different way. Embedded in Activation Hubs. Continuously atomizing the learning.

Build the flywheel. Designate the sensing layer. Enable the translators. Create the communities. And then let learning flow through the organization the way AI moves through your processes: continuously, contextually, and without a finish line.

Melissa Reeve is the founder of Hyperadaptive Solutions and author of the forthcoming Hyperadaptive: Rewiring the Enterprise to Become AI-Native (IT Revolution Press / Simon & Schuster, May 2026). The Hyperadaptive Model helps Fortune 500 enterprises build the infrastructure for AI-native operations. Pre-order your copy at hyperadaptive.solutions/book.

SPECIAL OPPORTUNITY

The first paid community peer roundtable is coming up on April 16th: Getting Your Organization to Agree on What ‘Adopting AI’ Means. RSVPs are already rolling in, and I’m genuinely excited about the group that’s forming. If you’ve been thinking about joining the paid side of Hyperadaptive, this is a great reason to do it. Small room, real talk, no fluff. Upgrade to paid to access.
